machine learning · unified views · bayesian

Most probabilistic models are one model in costumes

PCA, factor analysis, logistic regression, Gaussian mixtures, HMMs, and Kalman filters are the same probabilistic graphical model with different independence assumptions. Seeing this gives you one inference recipe that handles all of them.

Most of what we call “machine learning” is a small vocabulary of probabilistic models written out in a common language. PCA, factor analysis, logistic regression, Gaussian mixtures, hidden Markov models, and Kalman filters are not separate inventions. They are the same object (a joint distribution over observed and hidden variables) with different independence assumptions.

Once you learn to read the language, two things become easier. You stop memorizing models and start composing them, and you inherit one inference recipe, message passing, that works across all of them.

The question every model answers

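A probabilistic model is a joint distribution $p(x, z)$ over observed data $x$ and hidden variables $z$. Every modeling task reduces to one question: given the data, what do we believe about the hidden parts? Formally, compute the posterior by Bayes’ rule:

$$p(z \mid x) = \frac{p(x \mid z)\,p(z)}{p(x)}, \qquad p(x) = \sum_{z} p(x \mid z)\,p(z),$$

with the sum replaced by an integral when $z$ is continuous.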

That is the whole program. Choose how $z$ generates $x$ (the likelihood $p(x \mid z)$), choose what you believe about $z$ before seeing data (the prior $p(z)$), and the math prescribes what to believe after seeing data (the posterior $p(z \mid x)$).
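To make the recipe concrete, here is a minimal Python sketch of Bayes’ rule for a two-valued hidden variable. The coin setup, the names `prior`, `p_heads`, and `posterior`, and all the numbers are hypothetical, chosen only to show “likelihood times prior, then normalize”.

```python
# Hypothetical example: z is a "fair" or "biased" coin, x is a string of flips like "HTH".
# All numbers are illustrative assumptions.
prior = {"fair": 0.9, "biased": 0.1}      # p(z)
p_heads = {"fair": 0.5, "biased": 0.9}    # p(flip = "H" | z)


def posterior(flips):
    """Return p(z | flips) by Bayes' rule: likelihood * prior, then normalize."""
    unnorm = {}
    for z, pz in prior.items():
        lik = 1.0
        for f in flips:  # flips are conditionally independent given z
            lik *= p_heads[z] if f == "H" else 1.0 - p_heads[z]
        unnorm[z] = lik * pz
    evidence = sum(unnorm.values())  # p(x): the normalizing constant
    return {z: u / evidence for z, u in unnorm.items()}
```

Five heads in a row, `posterior("HHHHH")`, move the probability of “biased” from 0.1 to roughly 0.68; with no flips the function simply returns the prior.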

What varies across models is not this question. It is the structure of the joint: which variables depend on which, and how. That structure is a graph.

The graph factors the joint

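Writing out the full joint over $n$ discrete variables takes a table whose size is exponential in $n$ ($2^n$ entries if every variable is binary). What rescues us is conditional independence. In most sensible models, each variable depends directly on only a few others. Graphical models make that structure visible: nodes are variables, edges encode dependence, and the graph dictates how the joint factors into small local pieces. For a directed graph the factorization is $p(v_1, \dots, v_n) = \prod_i p(v_i \mid \mathrm{pa}(v_i))$, one small conditional per node given its parents.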
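A small Python sketch of the payoff, assuming a hypothetical chain $z_1 \to z_2 \to \dots \to z_n$ of binary variables with illustrative numbers: the joint is a product of one prior and $n-1$ small conditional tables, and it still sums to one.

```python
import itertools
from math import prod

# Hypothetical chain z1 -> z2 -> ... -> zn of binary variables; numbers are illustrative.
n = 10
p_first = [0.3, 0.7]                 # p(z1)
trans = [[0.9, 0.1], [0.2, 0.8]]     # trans[a][b] = p(z_{t+1} = b | z_t = a)


def chain_joint(z):
    """p(z1, ..., zn) as a product of small local factors."""
    return p_first[z[0]] * prod(trans[a][b] for a, b in zip(z, z[1:]))


# Free parameters of an unrestricted table over n binary variables vs. the chain
# (counting a separate 2x2 conditional table, i.e. 2 free numbers, for every step).
full_table_params = 2 ** n - 1
chain_params = 1 + 2 * (n - 1)

# Sanity check: the product of local factors is still a normalized joint.
total = sum(chain_joint(z) for z in itertools.product([0, 1], repeat=n))
```

For $n = 10$ an unrestricted table has 1023 free numbers, while the chain needs 19 even with a separate table at every step (3 if, as here, one table is shared).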

Three patterns of three nodes are enough to reason about any graph:

- Chain ($A \to B \to C$). Given $B$, $A$ and $C$ are independent. The grandparent stops mattering once you know the parent.

- Fork ($A \leftarrow B \to C$). Given $B$, $A$ and $C$ are independent. Siblings are conditionally independent given their common cause.

- Collider ($A \to B \leftarrow C$). Given $B$ (or any descendant of $B$), $A$ and $C$ may become dependent, even if they were marginally independent. This is the “explaining away” pattern.
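Explaining away is easy to check numerically. The sketch below assumes a hypothetical collider with two rare binary causes $A$ and $C$ and a noisy-OR effect $B$ (the noisy-OR form and all numbers are illustrative choices), and computes conditional probabilities by brute-force enumeration.

```python
import itertools

# Hypothetical collider A -> B <- C; numbers are illustrative.
p_a, p_c = 0.1, 0.1  # marginal probabilities that each cause is on


def p_b_on(a, c):
    """Noisy-OR: each active cause turns B on with prob 0.9; leak probability 0.01."""
    p_off = 0.99 * (0.1 if a else 1.0) * (0.1 if c else 1.0)
    return 1.0 - p_off


def joint(a, b, c):
    pa = p_a if a else 1.0 - p_a
    pc = p_c if c else 1.0 - p_c
    pb = p_b_on(a, c) if b else 1.0 - p_b_on(a, c)
    return pa * pb * pc


def prob_a(**evidence):
    """P(A = 1 | evidence) by enumerating all 8 joint states, e.g. prob_a(b=1, c=1)."""
    num = den = 0.0
    for a, b, c in itertools.product([0, 1], repeat=3):
        state = {"a": a, "b": b, "c": c}
        if all(state[k] == v for k, v in evidence.items()):
            w = joint(a, b, c)
            den += w
            num += w * a
    return num / den
```

Here $P(A{=}1) = P(A{=}1 \mid C{=}1) = 0.1$, so $A$ and $C$ start out independent. Observing $B{=}1$ raises $P(A{=}1)$ to about 0.5; additionally learning $C{=}1$ drops it back to about 0.11, because $C$ already explains $B$.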

These three cases generalize into an algorithm (Bayes-ball) that decides independence in any directed graph by a reachability test, with observed nodes shaded. A matching theorem says something stronger: the set of joints satisfying every independence the graph implies is exactly the set that factors according to the graph. For undirected graphs the analogous result, for strictly positive distributions, is Hammersley and Clifford’s theorem. Either way, checking independences is the same thing as factoring the joint.
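Below is a sketch of that reachability idea for directed graphs, in the spirit of Bayes-ball but written from scratch rather than taken from any library. It assumes the graph is given as a dict mapping each node to its set of parents; the function name `d_separated` is hypothetical. The search remembers whether it entered a node from a child or from a parent, which is exactly what distinguishes the chain, fork, and collider cases.

```python
def d_separated(parents, x, y, observed):
    """True if x and y are d-separated given `observed` in a DAG.

    `parents` maps every node to the set of its parents (x, y assumed unobserved).
    """
    observed = set(observed)
    children = {v: set() for v in parents}
    for v, ps in parents.items():
        for p in ps:
            children[p].add(v)

    # Observed nodes plus their ancestors: a collider here is "opened".
    ancestors, stack = set(), list(observed)
    while stack:
        v = stack.pop()
        if v not in ancestors:
            ancestors.add(v)
            stack.extend(parents[v])

    # Search over (node, direction): "up" = arrived from a child, "down" = from a parent.
    visited, stack = set(), [(x, "up")]
    while stack:
        v, direction = stack.pop()
        if (v, direction) in visited:
            continue
        visited.add((v, direction))
        if v == y and v not in observed:
            return False  # an active trail reaches y
        if direction == "up" and v not in observed:
            # Chain or fork through unobserved v: continue to parents and children.
            stack.extend((p, "up") for p in parents[v])
            stack.extend((c, "down") for c in children[v])
        elif direction == "down":
            if v not in observed:  # chain through unobserved v continues downward
                stack.extend((c, "down") for c in children[v])
            if v in ancestors:  # collider at v is active: v or a descendant is observed
                stack.extend((p, "up") for p in parents[v])
    return True
```

The three patterns come out as expected: in the chain and the fork, $A$ and $C$ are connected with nothing observed and separated by $B$; in the collider they are separated with nothing observed and become connected once $B$, or any descendant of $B$, is observed.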

One algorithm: message passing

Once you have a factored joint, computing a posterior marginal such as $p(z_i \mid x)$ means summing the joint over every hidden variable except $z_i$, with $x$ fixed at its observed values. A naive nested sum is exponential in the number of variables. The same factoring that gave us the graph lets us reorder the sums so that factors that do not depend on the current summation variable move outside it. This is the elimination algorithm, and it is just dynamic programming.
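A minimal Python sketch of elimination on a chain $z_1 \to \dots \to z_n$ with $K$ states, assuming no evidence and illustrative numbers: the brute-force version enumerates all $K^n$ states, while pushing each sum inward leaves $n-1$ small matrix-vector products, $O(nK^2)$ work.

```python
import itertools
from math import prod

# Hypothetical K-state chain z1 -> z2 -> ... -> zn (numbers illustrative, no evidence).
K, n = 3, 8
p1 = [0.5, 0.3, 0.2]                                      # p(z1)
T = [[0.8, 0.1, 0.1], [0.2, 0.6, 0.2], [0.1, 0.3, 0.6]]   # T[i][j] = p(z_{t+1}=j | z_t=i)


def brute_force_last(n):
    """p(z_n) by the naive nested sum over all K**n joint states."""
    out = [0.0] * K
    for z in itertools.product(range(K), repeat=n):
        out[z[-1]] += p1[z[0]] * prod(T[a][b] for a, b in zip(z, z[1:]))
    return out


def eliminate_last(n):
    """p(z_n) by pushing each sum inward: eliminate z1, then z2, and so on."""
    m = p1[:]  # m[j] holds p(z_t = j) for the current t, starting at t = 1
    for _ in range(n - 1):
        m = [sum(m[i] * T[i][j] for i in range(K)) for j in range(K)]
    return m
```

Both functions return the same distribution; the first touches 6561 joint states for $n = 8$, the second does seven 3-by-3 products.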

Doing the same work locally by letting each node send a summary to its neighbours gives message passing. Two rules suffice:

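- At a variable node, multiply the messages arriving from all other neighbouring factors and pass the product on.

- At a factor node, multiply the local factor by the messages arriving from all other neighbouring variables, then sum out every variable except the one receiving the message.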
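The two rules fit in a few lines of Python. This is a sketch, assuming a hypothetical three-variable tree factor graph with binary variables and made-up factor tables. Messages are computed recursively and recomputed on demand rather than cached, which is fine on a tree this small (caching them is what gives the two-pass schedule). A brute-force sum over all joint states is included as a check.

```python
import itertools
from math import prod

# Hypothetical tree factor graph: a - f_ab - b - f_bc - c, plus a unary factor on a.
# Variables are binary; every table entry is illustrative.
K = 2
variables = ["a", "b", "c"]
factors = {
    "f_a": (("a",), {(0,): 0.6, (1,): 0.4}),
    "f_ab": (("a", "b"), {(0, 0): 0.9, (0, 1): 0.1, (1, 0): 0.2, (1, 1): 0.8}),
    "f_bc": (("b", "c"), {(0, 0): 0.7, (0, 1): 0.3, (1, 0): 0.4, (1, 1): 0.6}),
}


def neighbours(v):
    """Factors whose scope contains variable v."""
    return [f for f, (scope, _) in factors.items() if v in scope]


def msg_var_to_factor(v, f):
    """Variable rule: product of messages into v from every neighbouring factor except f."""
    incoming = [msg_factor_to_var(g, v) for g in neighbours(v) if g != f]
    return [prod(m[k] for m in incoming) for k in range(K)]


def msg_factor_to_var(f, v):
    """Factor rule: multiply the factor by messages from its other variables, sum out all but v."""
    scope, table = factors[f]
    incoming = {u: msg_var_to_factor(u, f) for u in scope if u != v}
    out = [0.0] * K
    for assignment in itertools.product(range(K), repeat=len(scope)):
        values = dict(zip(scope, assignment))
        out[values[v]] += table[assignment] * prod(incoming[u][values[u]] for u in incoming)
    return out


def marginal(v):
    """Belief at v: product of all incoming factor messages, normalized."""
    incoming = [msg_factor_to_var(f, v) for f in neighbours(v)]
    belief = [prod(m[k] for m in incoming) for k in range(K)]
    total = sum(belief)
    return [b / total for b in belief]


def brute_marginal(v):
    """Reference answer: sum the full product of factors over all joint states."""
    out = [0.0] * K
    for assignment in itertools.product(range(K), repeat=len(variables)):
        values = dict(zip(variables, assignment))
        out[values[v]] += prod(table[tuple(values[u] for u in scope)]
                               for scope, table in factors.values())
    total = sum(out)
    return [o / total for o in out]
```

With these tables `marginal("b")` gives $[0.62, 0.38]$, matching `brute_marginal("b")`.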

For trees, two passes (leaves to root, then root to leaves) produce every marginal exactly. The forward-backward algorithm for HMMs and the Kalman smoother are this same recipe on a chain; belief propagation on loopy graphs runs it iteratively on graphs with cycles, where it is only approximate. Viterbi decoding is the same algorithm with max in place of sum.
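Here is the chain case as a Python sketch: forward-backward for a hypothetical two-state HMM with illustrative parameters, plus Viterbi written as the same forward recursion with `max` in place of `sum` and back-pointers to recover the path. `path_prob` scores one full path and is only there for brute-force checking. Probabilities are left unnormalized, so for long sequences you would rescale or work in log space.

```python
import itertools
from math import prod

# Hypothetical 2-state HMM; every number here is illustrative.
init = [0.6, 0.4]                    # p(z_1)
trans = [[0.7, 0.3], [0.4, 0.6]]     # trans[i][j] = p(z_{t+1} = j | z_t = i)
emit = [[0.9, 0.1], [0.2, 0.8]]      # emit[i][o] = p(x_t = o | z_t = i)
K = len(init)


def forward_backward(obs):
    """Posterior marginals p(z_t | x_1..x_T) from forward and backward messages."""
    T = len(obs)
    alpha = [[init[j] * emit[j][obs[0]] for j in range(K)]]  # alpha[t][j] = p(x_1..x_t, z_t = j)
    for t in range(1, T):
        alpha.append([emit[j][obs[t]] * sum(alpha[t - 1][i] * trans[i][j] for i in range(K))
                      for j in range(K)])
    beta = [[1.0] * K for _ in range(T)]  # beta[t][i] = p(x_{t+1}..x_T | z_t = i)
    for t in range(T - 2, -1, -1):
        beta[t] = [sum(trans[i][j] * emit[j][obs[t + 1]] * beta[t + 1][j] for j in range(K))
                   for i in range(K)]
    evidence = sum(alpha[-1])  # p(x_1..x_T)
    return [[alpha[t][k] * beta[t][k] / evidence for k in range(K)] for t in range(T)]


def viterbi(obs):
    """Most probable state path: the forward recursion with max in place of sum."""
    delta = [init[j] * emit[j][obs[0]] for j in range(K)]
    back = []
    for t in range(1, len(obs)):
        ptr = [max(range(K), key=lambda i: delta[i] * trans[i][j]) for j in range(K)]
        delta = [delta[ptr[j]] * trans[ptr[j]][j] * emit[j][obs[t]] for j in range(K)]
        back.append(ptr)
    path = [max(range(K), key=lambda j: delta[j])]
    for ptr in reversed(back):  # follow back-pointers from the last step
        path.append(ptr[path[-1]])
    return path[::-1]


def path_prob(z, obs):
    """Joint p(z, x) for one full state path, used for brute-force checks."""
    return init[z[0]] * emit[z[0]][obs[0]] * prod(
        trans[z[t - 1]][z[t]] * emit[z[t]][obs[t]] for t in range(1, len(obs)))
```

For observations `[0, 1, 1, 0]` the posterior marginals agree with brute-force enumeration over all 16 paths, and `viterbi` returns `[0, 1, 1, 0]`.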

Seeing message passing once makes several other algorithms fall into place. EM is message passing interleaved with a parameter update. Backpropagation is message passing on a computational graph (there is a companion post that unpacks this). Variational inference is message passing where you have replaced intractable messages with tractable approximations.
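As a sketch of the EM pattern, here is EM for a hypothetical one-dimensional mixture of two Gaussians in Python (data and initial values are made up). In a mixture the graph is so simple that the E-step’s inference reduces to one posterior $p(z_n \mid x_n)$ per point; in an HMM the same slot would be filled by forward-backward. The M-step then re-estimates the parameters from those posteriors.

```python
import math


def normal_pdf(x, mean, var):
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)


def em_gmm_1d(xs, weights, means, variances, iters=50):
    """EM for a 1-D Gaussian mixture; returns final parameters and the log-likelihood trace."""
    history = []
    K = len(weights)
    for _ in range(iters):
        # E-step (inference): responsibilities r[n][k] = p(z_n = k | x_n) under current parameters.
        resp, loglik = [], 0.0
        for x in xs:
            joint = [w * normal_pdf(x, m, v) for w, m, v in zip(weights, means, variances)]
            evidence = sum(joint)  # p(x_n)
            loglik += math.log(evidence)
            resp.append([j / evidence for j in joint])
        history.append(loglik)
        # M-step (parameter update): weighted maximum-likelihood estimates.
        nk = [sum(r[k] for r in resp) for k in range(K)]
        weights = [c / len(xs) for c in nk]
        means = [sum(r[k] * x for r, x in zip(resp, xs)) / nk[k] for k in range(K)]
        # Small variance floor guards against collapse (an added assumption, inactive here).
        variances = [max(sum(r[k] * (x - means[k]) ** 2 for r, x in zip(resp, xs)) / nk[k], 1e-6)
                     for k in range(K)]
    return weights, means, variances, history


# Hypothetical data: two loose clusters around -2 and +2.
data = [-2.1, -1.9, -2.3, -1.7, -2.0, 2.2, 1.8, 2.5, 1.9, 2.1]
weights, means, variances, history = em_gmm_1d(data, [0.5, 0.5], [-1.0, 1.0], [1.0, 1.0])
```

On this toy data the means settle near $-2.0$ and $2.1$, the averages of the two clusters, and the recorded log-likelihood never decreases, which is the property EM guarantees.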

What this buys you

Graphical models stopped being the headline in the mid-2010s because the headline moved to neural networks. The framing did not go away, though. It moved inside the networks.

- A VAE is a Bayes net with a Gaussian prior $p(z)$, a neural likelihood $p_\theta(x \mid z)$, and a variational approximation $q_\phi(z \mid x)$ to the posterior $p_\theta(z \mid x)$. Training maximizes a lower bound on $\log p_\theta(x)$ (the ELBO) that is a message-passing expression in disguise.

- A diffusion model is a chain of Gaussians, reverse-engineered by learning the backward messages.

- A graph neural network is message passing over a graph, where the messages are learned rather than derived.

- Score-based, flow-based, and energy-based models all sit inside the same frame.
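The ELBO claim can be checked on a model small enough to compute exactly. The Python sketch below assumes a hypothetical discrete latent $z$ with three values and one fixed observation $x$; in a VAE, $q$ and the likelihood would be neural networks and the expectation would be estimated by sampling. It verifies the identity $\log p(x) = \mathrm{ELBO}(q) + \mathrm{KL}\big(q(z) \,\|\, p(z \mid x)\big)$, so the bound is tight exactly when $q$ equals the posterior.

```python
import math

# Hypothetical discrete latent z in {0, 1, 2} and one fixed observation x; numbers illustrative.
prior = [0.5, 0.3, 0.2]   # p(z)
lik = [0.1, 0.6, 0.3]     # p(x | z) evaluated at the observed x

evidence = sum(p * l for p, l in zip(prior, lik))           # p(x)
posterior = [p * l / evidence for p, l in zip(prior, lik)]  # p(z | x)


def elbo(q):
    """E_q[log p(x, z) - log q(z)] for a distribution q over z (zero-mass terms skipped)."""
    return sum(qz * (math.log(p * l) - math.log(qz))
               for qz, p, l in zip(q, prior, lik) if qz > 0)


def kl(q, r):
    """KL(q || r) for discrete distributions."""
    return sum(qz * math.log(qz / rz) for qz, rz in zip(q, r) if qz > 0)
```

For a uniform `q` the ELBO falls short of $\log p(x)$ by exactly `kl(q, posterior)`, and `elbo(posterior)` recovers $\log p(x)$.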

The framing is useful not because it makes these methods “really” PGMs in some strong sense, but because it tells you what choice you are making at each design step. What is the graph? What are the independence assumptions? Which marginals do you want? How are you approximating the intractable ones? Those are the decisions that matter. The rest is engineering.

A short reading list

- Pearl, Probabilistic Reasoning in Intelligent Systems (1988), the founding text and still the best place to learn the intuitions.

- Bishop, Model-based machine learning (Phil. Trans. R. Soc. A, 2013), a short and readable summary of the “model then inference” paradigm.

- Ghahramani, Probabilistic machine learning and artificial intelligence (Nature, 2015), a broader map that includes non-parametrics and probabilistic programming.

2026 note: this post was drafted in late 2016, when graphical models were the standard vocabulary for probabilistic modeling. The vocabulary is still the right one. What changed is that the likelihood is now routinely a deep network, and the posterior is now routinely approximated by another one. The scaffolding stayed. The bricks got bigger.

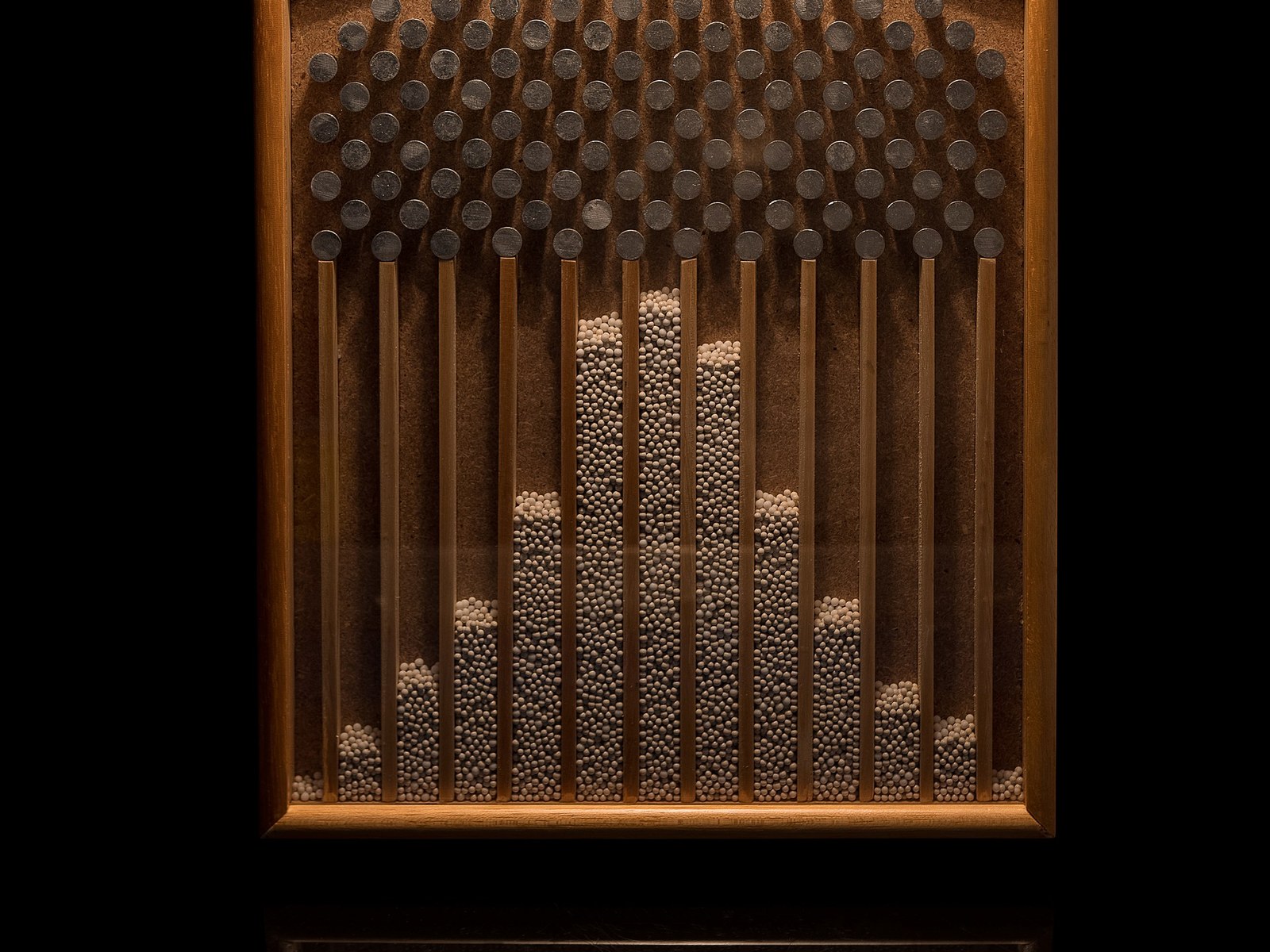

Cover image: Galton board (Matemateca IME/USP), photo by Rodrigo Argenton, CC BY-SA 4.0.