
Seven textbook models are one linear-Gaussian model

PCA, factor analysis, ICA, Gaussian mixtures, vector quantization, HMMs, and Kalman filters are the same two equations with different restrictions on the latent variables. One EM recipe fits all of them.

Seven models in the standard textbook (PCA, factor analysis, ICA, Gaussian mixtures, vector quantization, hidden Markov models, and Kalman filters) are the same linear-Gaussian state-space model with different restrictions on the latent variable. Once you see that, the EM algorithm stops being seven separate derivations and starts being one derivation you reuse.

This is a companion to the earlier post on graphical models. That one said: most probabilistic models factor into a graph and compute with message passing. This one says: the linear-Gaussian corner of that space is a strip of country small enough to map on one page.

The two equations

The parent model is a discrete-time linear dynamical system with Gaussian noise:

$$x_{t+1} = A x_t + w_t, \qquad w_t \sim \mathcal{N}(0, Q)$$

$$y_t = C x_t + v_t, \qquad v_t \sim \mathcal{N}(0, R)$$

$x_t$ is the hidden state, $y_t$ is the observation, $A$ is the transition matrix, $C$ is the emission (or generative) matrix, and $w_t$ and $v_t$ are independent Gaussian noises with covariances $Q$ and $R$. Two facts make this model tractable. Gaussians stay Gaussian under linear operations, so every marginal and conditional is Gaussian. And the Markov property means each $x_{t+1}$ depends only on $x_t$, so conditional on the latent chain the observations factor.
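A minimal sketch of sampling from the parent model can make the two equations concrete. The symbol names `A`, `C`, `Q`, `R` (transition matrix, emission matrix, state-noise and observation-noise covariances) are notation assumed here, and the function name `simulate_lds` and the example matrices are hypothetical.

```python
import numpy as np

def simulate_lds(A, C, Q, R, x0, T, seed=0):
    """Sample T steps from x_{t+1} = A x_t + w_t,  y_t = C x_t + v_t."""
    rng = np.random.default_rng(seed)
    k, p = A.shape[0], C.shape[0]
    xs = np.zeros((T, k))
    ys = np.zeros((T, p))
    x = np.asarray(x0, dtype=float)
    for t in range(T):
        xs[t] = x
        # Emission: linear map of the state plus Gaussian observation noise.
        ys[t] = C @ x + rng.multivariate_normal(np.zeros(p), R)
        # Transition: linear map of the state plus Gaussian state noise.
        x = A @ x + rng.multivariate_normal(np.zeros(k), Q)
    return xs, ys

# Hypothetical example: a slowly decaying 2-D rotation seen through 3 sensors.
theta = 0.1
A = 0.99 * np.array([[np.cos(theta), -np.sin(theta)],
                     [np.sin(theta),  np.cos(theta)]])
C = np.array([[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
Q = 0.01 * np.eye(2)
R = 0.1 * np.eye(3)
xs, ys = simulate_lds(A, C, Q, R, x0=[1.0, 0.0], T=200)
```

Each special case discussed later corresponds to switching off or restricting one of the arguments of this sampler.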

Seven standard models fall out by choosing what $x$ looks like and which parameters you freeze.

The seven costumes

Factor analysis. No dynamics ($A = 0$), a single snapshot instead of a sequence, continuous Gaussian $x$, diagonal observation noise $R$. The latent $x$ explains the correlations in $y$; the diagonal $R$ absorbs the rest.

PCA. Factor analysis with isotropic observation noise ($R = \sigma^2 I$) and the noise sent to zero ($\sigma^2 \to 0$). The principal directions are the leading eigenvectors of the sample covariance.
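One way to see what sending the noise to zero does, sketched under the assumption that the static model is written $y = Cx + v$ with $x \sim \mathcal{N}(0, I)$ and $v \sim \mathcal{N}(0, \sigma^2 I)$, and that $C$ has full column rank (the symbols are notation assumed here): the posterior mean of the latent turns into an ordinary least-squares projection onto the columns of $C$.

$$
\begin{aligned}
\mathbb{E}[x \mid y]
  &= C^\top \left(C C^\top + \sigma^2 I\right)^{-1} y \\
  &= \left(C^\top C + \sigma^2 I\right)^{-1} C^\top y \\
  &\xrightarrow{\;\sigma^2 \to 0\;} \left(C^\top C\right)^{-1} C^\top y
\end{aligned}
$$

The posterior covariance, $\sigma^2 \left(C^\top C + \sigma^2 I\right)^{-1}$, shrinks to zero in the same limit, so inference collapses from a distribution to a single projected point.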

ICA. Same static structure as factor analysis, but with a non-Gaussian prior on $x$. Strictly this leaves the linear-Gaussian family, but the state-space template still tells you what to estimate.

Gaussian mixtures and vector quantization. No dynamics, but now $x$ is a one-hot categorical (a cluster assignment). The emission reduces to picking a cluster mean (a column of $C$) and adding Gaussian noise. Vector quantization is the hard-assignment limit where the noise goes to zero.
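The hard-assignment limit is easy to check numerically. The sketch below assumes equal mixing weights and a shared isotropic noise variance, which is a simplification of a general Gaussian mixture; the names `responsibilities`, `means`, and `sigma2` are hypothetical, and the cluster means are stored as rows rather than as columns of an emission matrix.

```python
import numpy as np

def responsibilities(Y, means, sigma2):
    """Posterior over clusters for equal-weight, isotropic Gaussian components."""
    # Squared distance from every point to every mean: shape (n, k).
    d2 = ((Y[:, None, :] - means[None, :, :]) ** 2).sum(axis=2)
    logits = -0.5 * d2 / sigma2
    logits -= logits.max(axis=1, keepdims=True)   # stabilise the softmax
    r = np.exp(logits)
    return r / r.sum(axis=1, keepdims=True)

means = np.array([[0.0, 0.0], [4.0, 0.0]])
Y = np.array([[1.0, 0.0], [3.5, 0.0]])

soft = responsibilities(Y, means, sigma2=4.0)    # large noise: soft assignments
hard = responsibilities(Y, means, sigma2=1e-4)   # near-zero noise: one-hot rows
```

With the large variance each point splits its weight across both clusters; with the tiny variance each row of `hard` puts all its weight on the nearest mean, which is the vector-quantization assignment.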

Hidden Markov model. Discrete $x$ with Markov dynamics ($A$ becomes a transition matrix), and the emission is whatever distribution you want over $y$.
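To make the transition-matrix reading concrete, here is a minimal sketch of the forward (filtering) pass for an HMM. It assumes categorical emissions stored in a table `B`, which is only one of the emission choices the model allows; the names `hmm_forward`, `pi`, and `B` are hypothetical, and `A` is taken to be column-stochastic so that the predicted state distribution is `A @ alpha`.

```python
import numpy as np

def hmm_forward(pi, A, B, obs):
    """Filtered posteriors P(x_t | y_1..t) and log P(y_1..T) for a discrete HMM.

    pi[i]   = P(x_1 = i)
    A[j, i] = P(x_{t+1} = j | x_t = i)   (columns sum to 1)
    B[i, o] = P(y_t = o | x_t = i)       (assumed: categorical emissions)
    """
    alpha = np.asarray(pi, dtype=float)
    filtered, loglik = [], 0.0
    for t, o in enumerate(obs):
        if t > 0:
            alpha = A @ alpha            # predict: push the belief through A
        alpha = alpha * B[:, o]          # weight by the emission likelihood
        norm = alpha.sum()               # P(y_t | y_1..t-1)
        loglik += np.log(norm)
        alpha = alpha / norm
        filtered.append(alpha)
    return np.array(filtered), loglik

pi = np.array([0.6, 0.4])
A = np.array([[0.7, 0.4],
              [0.3, 0.6]])
B = np.array([[0.9, 0.1],
              [0.2, 0.8]])
filtered, loglik = hmm_forward(pi, A, B, obs=[0, 1])
```

The predict-then-reweight structure of the loop is the discrete counterpart of a predict-then-update step in a continuous-state filter.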

Kalman filter and linear dynamical system. Continuous Gaussian $x$, continuous $y$, dynamics and emission both in play. This is the parent model written out with nothing switched off.
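A single step of the Kalman filter can be sketched in a few lines. This assumes the standard predict-then-update form with known parameters; the function name `kalman_step` and the argument names (`A`, `C` for the transition and emission matrices, `Q`, `R` for the noise covariances) are notation assumed here.

```python
import numpy as np

def kalman_step(mu, P, y, A, C, Q, R):
    """Advance the filtered posterior N(mu, P) by one step, given the next y."""
    # Predict: push the belief through the dynamics.
    mu_pred = A @ mu
    P_pred = A @ P @ A.T + Q
    # Update: correct the prediction with the new observation.
    S = C @ P_pred @ C.T + R                  # innovation covariance
    K = np.linalg.solve(S, C @ P_pred).T      # Kalman gain P_pred C^T S^{-1}
    mu_new = mu_pred + K @ (y - C @ mu_pred)
    P_new = (np.eye(len(mu)) - K @ C) @ P_pred
    return mu_new, P_new

# Scalar sanity check: unit prior variance, unit observation noise, no state noise.
one = np.eye(1)
mu, P = kalman_step(np.array([0.0]), one, np.array([2.0]),
                    A=one, C=one, Q=0 * one, R=one)
```

In this scalar check the prior and the observation are equally uncertain, so the posterior mean lands halfway between the prior mean 0 and the observation 2, and the variance halves.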

You can draw a 2x2 table with “static vs. sequential” on one axis and “continuous vs. discrete latent” on the other, and every cell is one of the above.

| | Static ($x$ is one snapshot) | Sequential ($x_t$ evolves) |
|---|---|---|
| Continuous latent | Factor analysis, PCA, ICA | Kalman filter, LDS |
| Discrete latent | Mixture of Gaussians, VQ | HMM |

One fitting recipe: EM

The reason this unification is useful in practice is that one algorithm fits all of them. EM (expectation-maximization) has two steps that keep the same shape across the seven models.

- E-step. Given current parameters, compute the posterior over latents. For PCA and factor analysis this is a single Gaussian conditional. For mixtures this is a softmax responsibility per data point. For HMMs it is the forward-backward algorithm. For the LDS it is the Kalman filter followed by the Rauch-Tung-Striebel smoother. All four are instances of message passing on the same underlying graph.

- M-step. Given the latent posteriors, fit the parameters by weighted least squares. The weights come from the E-step. For each specific model this reduces to a closed-form update: a regression of the data on the expected latents for PCA and factor analysis (for PCA, the fixed point spans the leading eigenvectors of the sample covariance), responsibility-weighted cluster means and covariances for Gaussian mixtures, normalized expected transition and emission counts for HMMs (the re-estimation step of Baum-Welch), and regressions on the smoothed state moments for the LDS.
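The two steps can be sketched for the simplest member of the family. The code below is the zero-noise EM iteration for PCA, chosen here only because both steps fit on one line each; it is a sketch under that assumption, not a general-purpose fitter. The function name `em_pca`, the data layout (one centred data point per column of `Y`), and the synthetic data are all hypothetical.

```python
import numpy as np

def em_pca(Y, k, n_iter=50, seed=0):
    """Zero-noise EM for PCA. Y is (p, n): one centred data point per column."""
    rng = np.random.default_rng(seed)
    C = rng.normal(size=(Y.shape[0], k))        # random initial loadings
    for _ in range(n_iter):
        # E-step: the latent posterior collapses to a least-squares projection.
        X = np.linalg.solve(C.T @ C, C.T @ Y)           # (k, n)
        # M-step: regress the data on the inferred latents.
        C = np.linalg.solve(X @ X.T, X @ Y.T).T         # (p, k)
    return C

# Hypothetical data: 3-D points with one dominant direction of variance.
rng = np.random.default_rng(1)
basis, _ = np.linalg.qr(rng.normal(size=(3, 3)))
Y = basis @ (np.array([[3.0], [1.0], [0.5]]) * rng.normal(size=(3, 500)))
Y = Y - Y.mean(axis=1, keepdims=True)

C = em_pca(Y, k=1)
top = np.linalg.eigh(Y @ Y.T)[1][:, -1]         # leading eigenvector
alignment = abs(top @ C[:, 0]) / np.linalg.norm(C[:, 0])
```

`alignment` is the absolute cosine between the learned loading and the leading eigenvector of the sample covariance, so a value close to 1 means the iteration found the same direction an eigendecomposition would. For the other models the loop keeps this shape and only the two inner lines change.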

Seeing EM once on the general linear-Gaussian model means you have derived all seven specific EM algorithms at once. The converse is also true: a reader who has only seen Baum-Welch can read the Kalman smoother update and recognize it as the same two steps.

A few subtleties

Three details are worth flagging because they trip people up on a first pass.

Degeneracy. In the general LDS, all the structure in $Q$ can be absorbed into $A$ and $C$ by a linear change of variables on $x$, so you can safely assume $Q$ is diagonal (in fact, the identity). The same is not true for $R$, because $y$ is observed and you cannot rescale it freely.
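The absorption can be written out explicitly. The sketch below assumes the state-noise covariance `Q` is positive definite and uses the change of variables $x' = Q^{-1/2} x$; the function name `whiten_state_noise` and the example matrices are hypothetical.

```python
import numpy as np

def whiten_state_noise(A, C, Q):
    """Return (A', C') for an equivalent model whose state noise is the identity."""
    # Symmetric square root of Q and its inverse via eigendecomposition.
    evals, evecs = np.linalg.eigh(Q)
    Q_half = evecs @ np.diag(np.sqrt(evals)) @ evecs.T
    Q_half_inv = evecs @ np.diag(1.0 / np.sqrt(evals)) @ evecs.T
    # x' = Q^{-1/2} x  =>  A' = Q^{-1/2} A Q^{1/2},  C' = C Q^{1/2},  Q' = I.
    return Q_half_inv @ A @ Q_half, C @ Q_half

A = np.array([[0.9, 0.1], [0.0, 0.8]])
C = np.array([[1.0, 0.0], [1.0, 1.0], [0.0, 2.0]])
Q = np.array([[2.0, 0.5], [0.5, 1.0]])
A_w, C_w = whiten_state_noise(A, C, Q)

# State noise reaches the observations identically in both parameterizations.
direct_original = C @ Q @ C.T
direct_whitened = C_w @ C_w.T
```

Because the observations never see $x$ directly, only its image under the emission matrix, the two parameterizations assign the same distribution to every observed sequence.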

What question are you answering? Fitting a linear-Gaussian model splits into two kinds of task. When the latent has a physical meaning (position in a tracking problem, phoneme in a speech problem) and the matrices are known from physics or from a pre-trained model, you are doing filtering or smoothing, and the quantity of interest is the posterior over states. When the latent structure is what you are trying to discover (the hidden factors in factor analysis, the clusters in a mixture model, the regimes of an economic time series), you are doing learning, and the quantity of interest is the parameters. EM handles both because it alternates between them.

Identifiability. Several of the parameter settings are equivalent up to an invertible linear map on the latent, because $C x = (C M^{-1})(M x)$ for any invertible $M$. This is what makes factor analysis and PCA rotation-invariant, and it is what ICA exploits: a non-Gaussian prior on $x$ breaks the rotational symmetry and identifies the latent sources up to permutation and sign.
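A quick numerical check of the rotational symmetry, assuming a static model with a standard-normal latent so that the observations have covariance $C C^\top + R$. The variable names and the random matrices are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
p, k = 5, 2
C = rng.normal(size=(p, k))                     # loading (emission) matrix
R = np.diag(rng.uniform(0.1, 1.0, size=p))      # diagonal observation noise

# Random orthogonal M from a QR decomposition.
M, _ = np.linalg.qr(rng.normal(size=(k, k)))
C_rot = C @ M.T          # C M^{-1}, since M^{-1} = M^T for an orthogonal M

cov_original = C @ C.T + R
cov_rotated = C_rot @ C_rot.T + R
same_likelihood = np.allclose(cov_original, cov_rotated)
```

The two loading matrices differ, yet they imply the same Gaussian over the observations, so no amount of data can tell them apart. A non-Gaussian prior is not preserved by the rotation, which is why it removes the ambiguity.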

Why this matters in 2026

Linear-Gaussian state space models stopped being the headline once recurrent networks and transformers took over sequence modeling. They did not disappear. Two threads keep the family alive.

The first is that deep state-space models (S4, S5, Mamba, and the line of linear-recurrence architectures that followed) are built on the same linear state recurrence as the LDS, with structured matrices, the noise terms usually dropped, and learned nonlinearities wrapped around the linear core. The HiPPO parameterization, the diagonal-plus-low-rank trick, and the selective state-space idea are all choices about how to structure or condition those matrices. Knowing the classical LDS makes it easy to read the new papers.

The second is that initialization and interpretation still lean on the linear-Gaussian base case. A deep sequence model often gets initialized near a linear-Gaussian solution, and its first-order behaviour on short sequences is approximated by the same Kalman-filter math.

The seven costumes are still worth knowing, because the body underneath did not change.

Reading

- Roweis and Ghahramani, A Unifying Review of Linear Gaussian Models (Neural Computation, 1999), the canonical reference for this framing.

- Bishop, Pattern Recognition and Machine Learning (2006), chapters 12-13, for worked derivations of each special case.

- Murphy, Machine Learning: A Probabilistic Perspective (2012), chapters 11-12 and 17-18, for the EM derivations side by side.

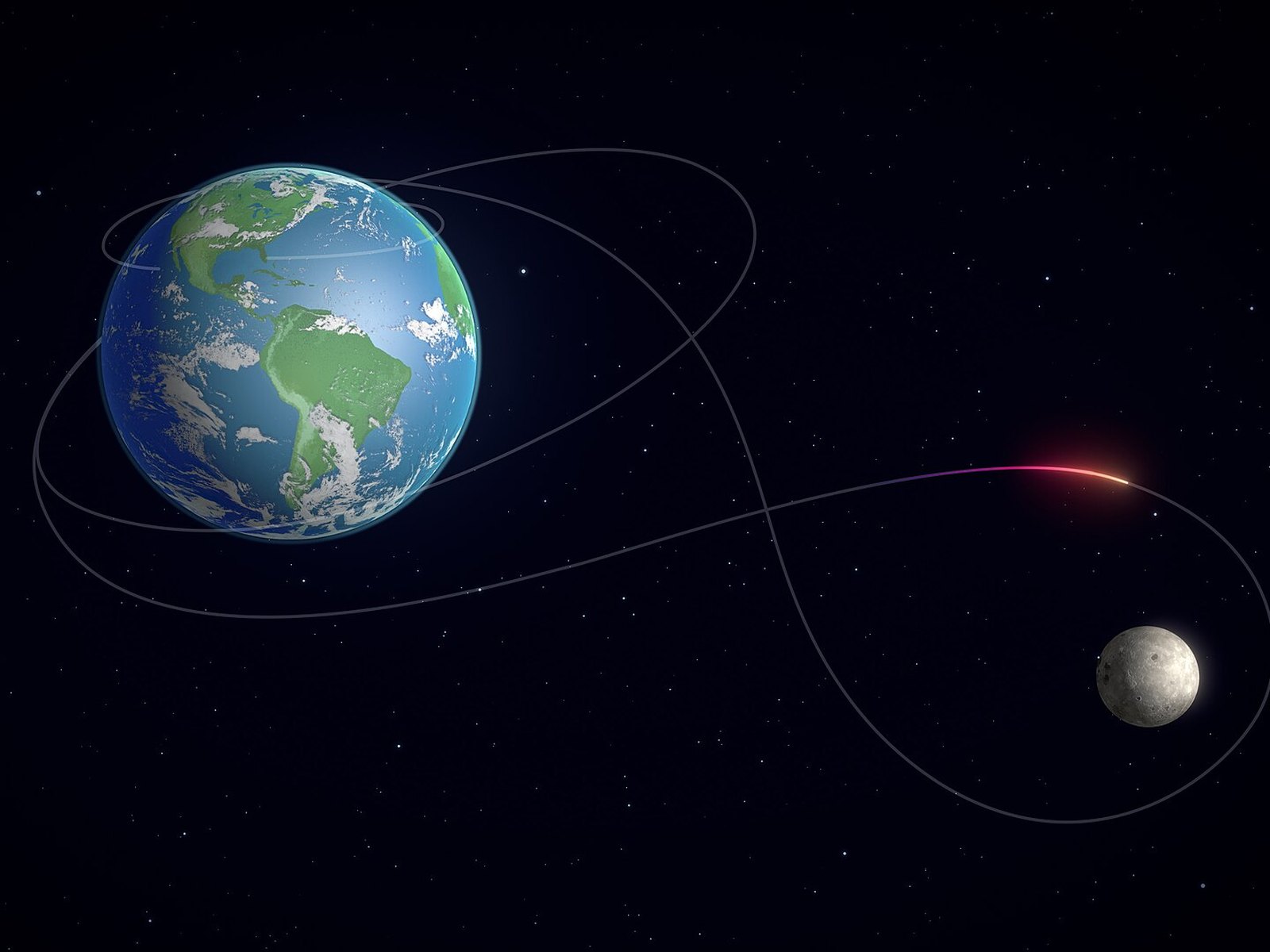

Cover image: Artemis-II lunar flyby trajectory, NASA’s Scientific Visualization Studio, public domain.